From ChatGPT to AI Agents: The Evolution of How We Use AI

The journey from ChatGPT conversations to autonomous AI agent teams. How AI evolved from chatbots to tool-users to agents to coordinated teams.

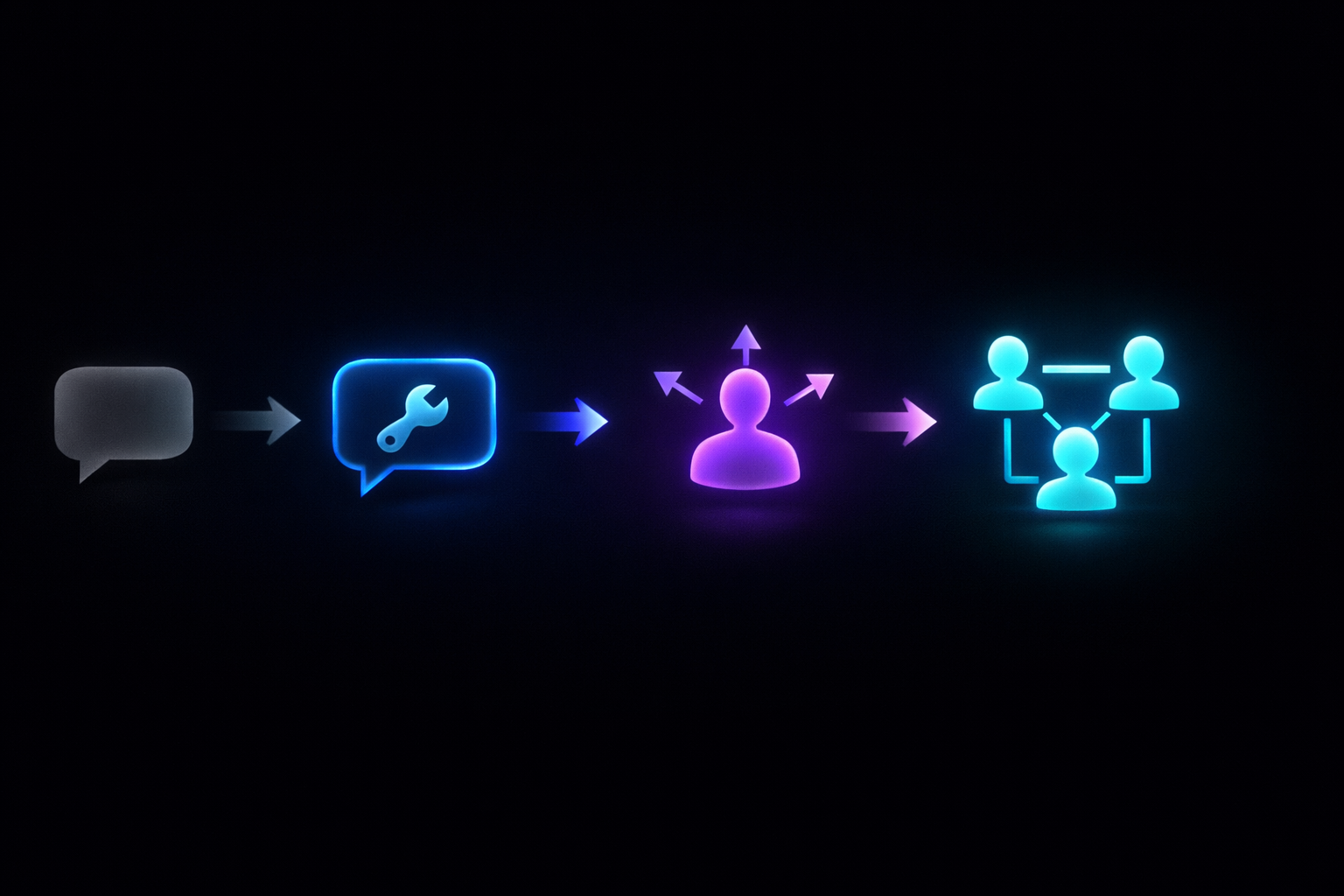

AI has evolved through four distinct phases in just 3.5 years: chatbot (2023), tool-use (2024), agent (2025), and team (2026). Each phase solved the previous one's core bottleneck. Chatbots couldn't act. Tool-using AI couldn't plan. Single agents couldn't parallelize or specialize. Today's multi-agent teams — running 4 specialized agents simultaneously for $10–30/month — represent a step function in productivity that the previous three phases couldn't achieve.

- Four distinct AI phases: Chatbot (2023) → Tool-Use (2024) → Agent (2025) → Team (2026)

- Each phase solved the previous phase's bottleneck and built on it rather than replacing it

- The Team era isn't about smarter models — it's about better system design: specialization + parallel execution

- The jump from chatbot to agent team is a productivity step function, not an incremental improvement

- Your role keeps shifting: writer → operator → manager → executive

In November 2022, ChatGPT launched and changed everything. Three and a half years later, the way we use AI is almost unrecognizable. We went from typing questions into a chatbox to running teams of autonomous AI agents that code, write, research, and deploy — coordinating with each other while we focus on the work that actually requires a human brain.

Understanding these four phases helps you see where we are, where we're going, and how to position yourself ahead of the next shift.

Phase 1 — 2023: The Chatbot Era

The paradigm: One human, one AI, one conversation at a time.

ChatGPT gave the world its first taste of conversational AI that actually worked. You typed a question, got a surprisingly coherent answer, and the entire knowledge work industry collectively realized something fundamental had changed. Within 2 months of launch, ChatGPT reached an estimated 100 million users, at the time the fastest consumer app adoption on record.

What people used it for:

- Writing emails and messages

- Answering questions (replacing some Google searches)

- Brainstorming and ideation

- Summarizing documents

- Simple code generation (copy-paste into your editor)

The experience: You opened a chat window. You typed. The AI responded. If the response wasn't right, you iterated. The conversation was the entire interface.

Key models launched: GPT-3.5 (Nov 2022), GPT-4 (March 2023), Claude 1 (March 2023)

The bottleneck that drove Phase 2: The AI could talk about doing things, but it couldn't actually do anything. "Write me a Python script" produced text. But you had to copy it, save it, run it, find the bugs, paste the errors back, iterate. The human was the execution layer every single time.

The bottleneck wasn't the AI's intelligence. It was its inability to touch the real world. It was a brain in a jar.

Phase 2 — 2024: The Tool-Use Era

The paradigm: AI that can use tools, not just generate text.

The industry's answer to the "brain in a jar" problem was function calling — giving AI models the ability to invoke external tools. Instead of describing what to do, the AI could now actually do it.

Key breakthroughs:

- Function calling / tool use — models learned to output structured tool calls instead of just text

- Code Interpreter — ChatGPT could write and execute Python code in a sandbox, see results, and iterate

- Plugins and integrations — AI could call APIs, search the web, read documents, access databases

- RAG — models could search knowledge bases before responding

What people used it for:

- Data analysis (upload a CSV, get charts and insights)

- Code generation with execution and iteration

- Web research with real-time search results

- Document processing and information extraction

The leap from chatbot to tool-using AI was the moment AI went from "talking about doing things" to actually doing them. OpenAI's function calling API (released June 2023) was the technical unlock that made this possible.
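The function-calling pattern described above can be sketched in a few lines. This is a minimal illustration, not any vendor's actual API: the `TOOLS` registry, the `dispatch` helper, and the JSON shape of the tool call are all assumptions made for the example, and the "model output" is a hard-coded string standing in for what a real model would emit.

```python
import json


# Hypothetical tools: plain Python functions the model is allowed to call.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"  # stand-in for a real weather API call


def add(a: float, b: float) -> float:
    return a + b


# Hypothetical registry mapping tool names to callables.
TOOLS = {"get_weather": get_weather, "add": add}


def dispatch(model_output: str):
    """Parse a structured tool call and run the matching function."""
    call = json.loads(model_output)  # assumed shape: {"name": ..., "arguments": {...}}
    tool = TOOLS.get(call["name"])
    if tool is None:
        raise ValueError(f"unknown tool: {call['name']}")
    return tool(**call["arguments"])


# Stand-in for a model that emits a structured call instead of prose.
fake_model_output = '{"name": "add", "arguments": {"a": 2, "b": 3}}'
print(dispatch(fake_model_output))  # prints 5
```

The point of the sketch is the division of labor: the model only produces structured text, and ordinary code on the other side decides which function to run and with which arguments.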

The bottleneck that drove Phase 3: Tool use was powerful but reactive. You still initiated every action. The AI would use tools when asked, but it didn't plan multi-step workflows without constant human direction. The AI had hands now — but it didn't have a plan.

Phase 3 — 2025: The Agent Era

The paradigm: AI that plans, executes, and iterates autonomously.

This was the year the industry went from "AI that uses tools when you ask" to "AI that figures out what tools to use, in what order, and handles the whole workflow."

Key breakthroughs:

- Autonomous agents: AI systems that receive a goal and execute multi-step plans

- Claude Code and OpenAI Codex CLI: coding agents that read codebases, write code, run tests, and iterate without human intervention

- Computer use: Claude gained the ability to operate a full desktop environment (click, type, navigate); the capability first shipped as a beta in late 2024

- Agentic frameworks: CrewAI, AutoGen, and LangGraph, all of which predate 2025, matured enough to make custom agent workflows practical

- Persistent context: agents maintained state across sessions (memory systems, status files)
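The plan, execute, iterate loop behind these agents can be sketched roughly as follows. Everything here is hypothetical: `plan_next_step` stands in for a language model choosing the next action, `execute` stands in for real tool calls, and the failing first test run is simulated so the retry path is visible.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    goal: str
    done: list = field(default_factory=list)  # names of completed steps
    log: list = field(default_factory=list)   # (step, outcome) history


def plan_next_step(state: AgentState):
    """Hypothetical planner: a real agent would ask a model what to do next."""
    for step in ("plan", "write_code", "run_tests"):
        if step not in state.done:
            return step
    return None  # nothing left to do


def execute(step: str, attempt: int) -> bool:
    """Hypothetical executor: simulate tests failing on the first attempt."""
    if step == "run_tests" and attempt == 0:
        return False
    return True


def run_agent(goal: str, max_iterations: int = 10) -> AgentState:
    state = AgentState(goal)
    attempts = {}
    for _ in range(max_iterations):  # hard cap so the loop cannot run forever
        step = plan_next_step(state)
        if step is None:
            break
        n = attempts.get(step, 0)
        ok = execute(step, n)
        attempts[step] = n + 1
        state.log.append((step, "ok" if ok else "retry"))
        if ok:
            state.done.append(step)
    return state


state = run_agent("Build me a user settings page")
print(state.log)
```

The iteration cap is a deliberate design choice in this sketch: an autonomous loop needs some stopping condition other than "the planner says we are done".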

What people used it for:

- "Build me a user settings page" → agent plans, codes, tests, and delivers

- "Research our top 5 competitors" → agent visits websites, extracts data, writes a report

- "Fix the bugs in this issue tracker" → agent reads issues, finds code, writes fixes, runs tests

The bottleneck that drove Phase 4: A single agent trying to do everything hit a quality ceiling. An agent great at coding writes mediocre marketing copy. An agent context-loaded with code architecture can't simultaneously hold a content strategy in its 200K-token working memory. And one agent can only do one thing at a time — no parallelism.

By late 2025, a common report from developers was that single-agent systems hit a "quality wall" on projects spanning more than two distinct skill domains. The fix required an architectural change, not a better model.

Phase 4 — 2026: The Team Era

The paradigm: Coordinated teams of specialized AI agents working together.

This is where we are now. The insight: if agents work better when specialized, give each agent a specialty and let them coordinate. A 4-agent team (CEO + Developer + Marketer + Automator) outperforms a single agent by every measurable metric on complex, multi-domain work.

Key breakthroughs:

- Multi-agent orchestration — systems that coordinate multiple agents with different roles

- Inter-agent communication — message bridges, shared workspaces, and event systems

- Coding delegation — agents that spawn other agents for specialized tasks

- Model mixing — different agents running different models (e.g., Claude Sonnet for writing, DeepSeek V3 for routine tasks)

- Desktop applications — multi-agent systems running locally on your machine for $149 one-time, powered by runtimes like OpenClaw

What makes this phase different: It's not about smarter models. The individual models in 2026 aren't dramatically smarter than late 2025 models. What changed is how we organize them: specialization, coordination, memory, and delegation together produce dramatically better outcomes.

A team of Sonnet-powered agents with good architecture outperforms a single Opus agent trying to do everything. The system is smarter than its parts.
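A rough sketch of the two ideas above, specialization plus parallel execution, using Python's standard `asyncio`. The role names follow the article, but the `TEAM` mapping, the placeholder model names, and the `run_specialist` and `run_team` functions are assumptions for illustration; the sleep stands in for a real model call, and this is not how any particular product implements coordination.

```python
import asyncio

# Hypothetical role-to-model mapping (model mixing); model names are placeholders.
TEAM = {
    "developer": "model-a",
    "marketer": "model-b",
    "automator": "model-c",
}


async def run_specialist(role: str, model: str, task: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for a slow model call
    return f"[{role}/{model}] finished: {task}"


async def run_team(tasks: dict) -> dict:
    """Coordinator: fan tasks out to specialists, then collect the results."""
    jobs = [run_specialist(role, TEAM[role], task) for role, task in tasks.items()]
    results = await asyncio.gather(*jobs)  # specialists run concurrently
    return dict(zip(tasks, results))       # gather preserves input order


if __name__ == "__main__":
    out = asyncio.run(run_team({
        "developer": "landing page",
        "marketer": "email sequence",
        "automator": "deployment pipeline",
    }))
    for line in out.values():
        print(line)
```

Because the three calls overlap, total wall-clock time is close to the slowest single task rather than the sum of all three, which is the parallelism a single agent cannot offer.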

The Pattern: Each Phase Solves the Previous Bottleneck

| Era | Core Innovation | Bottleneck Solved | New Bottleneck Created |
|---|---|---|---|
| 2023: Chatbot | Conversational AI | Access to AI intelligence | Couldn't act (brain in a jar) |
| 2024: Tool-Use | Function calling | AI could act, not just talk | Couldn't plan multi-step workflows |
| 2025: Agent | Autonomous planning | AI could work without step-by-step guidance | Couldn't specialize or parallelize |
| 2026: Team | Multi-agent coordination | AI could specialize and run in parallel | Next phase TBD |

No phase replaced the previous one; each built on it:

- Teams still use tools (Phase 2 capability)

- Agents still have conversations (Phase 1 capability)

- Chatbots still answer questions

Each layer adds capability the layer below couldn't provide.

Understanding all four phases helps you deploy the right tool for each job — instead of forcing everything through a chat window or assuming "more powerful model" solves every problem.

What's Next: Predictions for 2027 and Beyond

Persistent Agent Identity

Today's agents wake up fresh each session and reconstruct context from files. Tomorrow's agents may maintain genuine continuity — memory of working relationships, learned preferences, accumulated expertise internalized through months of collaboration.
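The file-based approach that today's agents rely on can be sketched as a status file that is read at the start of each session and rewritten at the end. The file name, the JSON layout, and the `load_state`, `save_state`, and `run_session` helpers are all hypothetical; real agent runtimes may store context quite differently.

```python
import json
import tempfile
from pathlib import Path


def load_state(path: Path) -> dict:
    """Rebuild context from the status file, or start fresh if none exists."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"sessions": 0, "notes": []}


def save_state(path: Path, state: dict) -> None:
    path.write_text(json.dumps(state, indent=2), encoding="utf-8")


def run_session(path: Path, note: str) -> dict:
    """One agent session: load prior context, do work, persist what was learned."""
    state = load_state(path)
    state["sessions"] += 1
    state["notes"].append(note)  # stand-in for whatever the agent learned
    save_state(path, state)
    return state


# Two separate "sessions" sharing nothing but the file on disk.
with tempfile.TemporaryDirectory() as tmp:
    status_file = Path(tmp) / "status.json"
    run_session(status_file, "user prefers short commit messages")
    state = run_session(status_file, "tests live in ./tests")
    print(state["sessions"], state["notes"])
```

The second session knows about the first only through the file, which is the gap that "genuine continuity" would close.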

Agent-to-Agent Ecosystems

Currently, agents on different platforms can't collaborate. Standardized agent communication protocols (similar to HTTP for websites) could enable cross-organization agent collaboration — your Marketer agent coordinating with a partner company's PR agent.

The Human Role Shifts (Again)

- Chatbot era: you were the writer, the AI was the editor

- Tool era: you were the operator, the AI was the assistant

- Agent era: you were the manager, the AI was the worker

- Team era: you're the executive, the AI is the team

- Next phase: you may become the board member — setting strategy while the AI team handles execution and tactical decision-making

Where You Should Be Right Now

If you're still in the chatbot phase — opening ChatGPT, typing questions, copy-pasting answers — you're leaving enormous capability on the table.

The jump from chatbot to agent team isn't gradual. It's a step function in productivity. The sooner you make the jump, the longer you compound the advantage.

You don't need to understand every technical detail to make the jump. Delegate your first real task to an AI team and see what comes back. That experience changes your mental model more than any blog post can.

Key Takeaways

- AI has evolved through 4 phases in 3.5 years: Chatbot → Tool-Use → Agent → Team, each solving the previous phase's bottleneck

- The Team era (2026) isn't about smarter models — better system design (specialization, coordination, parallelism) is the actual driver

- Each phase builds on the previous one; understanding all 4 helps you deploy the right AI approach for each job

- The jump from chatbot to agent team is a step function in productivity, not an incremental improvement

- The human role keeps shifting upward: writer → operator → manager → executive

Frequently Asked Questions

What's the difference between ChatGPT and an AI agent?

ChatGPT is a chatbot: it responds to questions but can't take actions in the real world without you executing the output. An AI agent combines a language model with tool access (code execution, web browsing, file operations) and autonomous planning. Instead of describing what to do, an agent does it. Agents can run for minutes or hours on a task without human intervention at each step.

What is an AI agent team?

An AI agent team is a group of specialized AI agents, each with a specific role (e.g., CEO, Developer, Marketer, Automator), that coordinate through a message bridge to complete complex, multi-domain projects. Unlike a single agent trying to do everything, each team member focuses on its specialty and hands work to the next agent at the appropriate time. Teams run agents in parallel, dramatically reducing project completion time.

When did AI agents become practical?

AI agents became practically useful in 2025 with the release of Claude Code, OpenAI's Codex CLI, and mature agentic frameworks (CrewAI, AutoGen, LangGraph). These tools let agents plan multi-step workflows, execute code, browse the web, and maintain context across sessions. Multi-agent teams became accessible to non-developers in 2026 with desktop products like noHuman Team, which is built on OpenClaw, the open-source agent runtime that provides containers, memory, channels, and coding delegation out of the box.

How much better are AI agent teams than single agents?

For complex, multi-domain projects, AI agent teams are dramatically better. A 4-agent team running in parallel can complete a product launch (landing page + email sequence + deployment pipeline) in 2–3 hours. The equivalent single-agent work takes 8+ hours with significant quality degradation as the context window fills. For simple single-domain tasks, a single agent is still perfectly capable.

What is the next phase of AI after agent teams?

The likely next phases are persistent agent identity (agents that maintain continuity across months, not sessions), agent-to-agent ecosystems (standardized protocols for agents from different organizations to collaborate), and proactive AI (agents that initiate actions based on monitoring, not just respond to requests). The human role will likely shift from executive to board member, setting strategy while AI handles execution and tactical decisions.

Ready to make the jump to noHuman Team? Download noHuman Team — powered by OpenClaw, four noHumans (CEO, Developer, Marketer, and Automator) working together on your machine, controlled from Telegram or your terminal. $149 one-time, no subscriptions.
